Observability Isn’t Just Logs — It’s a Feedback Loop

Categories: [observability], [engineering], [cto-journal]

Tags: [elastic], [akamas], [telemetry], [optimization], [devops], [ai-tuning]

Most people don’t connect observability and autonomous tuning.

To be honest, neither did I — not at first.

When you’ve been around as long as I have, you start seeing trends repeat themselves.

The “monitoring revolution” wasn’t the first of its kind. Before observability became a buzzword, we had a decade of “log everything”. Before that, SNMP traps and MRTG graphs.

They were always about watching. Never doing.

Akamas entered our world from a completely different door: performance optimization.

It was built to find optimal configuration values for complex systems — JVM heap sizes, thread pools, container resources — without trial-and-error guesswork.

Nothing about it screamed “observability tool”.

So how did it end up inside our observability stack?

That’s the story.

From “Monitoring” to “Observability” to… Something Else

The typical observability architecture in 2025 looks something like this:

- Collectors: Filebeat, Metricbeat, sometimes OpenTelemetry for traces.

- Ingestion and enrichment: Logstash adding metadata like node role, service name, env tag.

- Storage and search: Elasticsearch for logs and metrics, maybe Prometheus for time-series.

- Dashboards: Grafana or Kibana to make it all pretty.

This is fine — until you ask the question:

“So… what do we actually do with this information?”

And the room goes quiet.

The Gap We Kept Falling Into

Every post-incident review went like this:

- We saw the problem in a dashboard (yay).

- We found the cause in logs or metrics (slower yay).

- We made a change to fix it (ugh, manual).

And the truth is — that last step was always manual.

Even if we automated deployment, even if we had IaC, someone had to decide what to change and by how much.

And “someone” was usually guessing based on gut feel.

We were using observability for diagnosis but never prescription.

That’s when I realised we were running an open loop: signals came out of the system, but nothing fed back into it automatically.

Enter Akamas — But Not For the Reason You Think

Akamas came into the picture on a completely different project: tuning Java workloads for an analytics platform.

The goal was simple: reduce infrastructure costs without killing performance.

Akamas did its job — brilliantly. It ran experiments, adjusted parameters, and found sweet spots we wouldn’t have tried ourselves.

Then something clicked:

Every experiment Akamas ran was based on metrics we already had… from our observability stack.

In other words:

- Observability was giving us the signals.

- Akamas was designed to act on those signals.

- All we needed to do was close the loop.

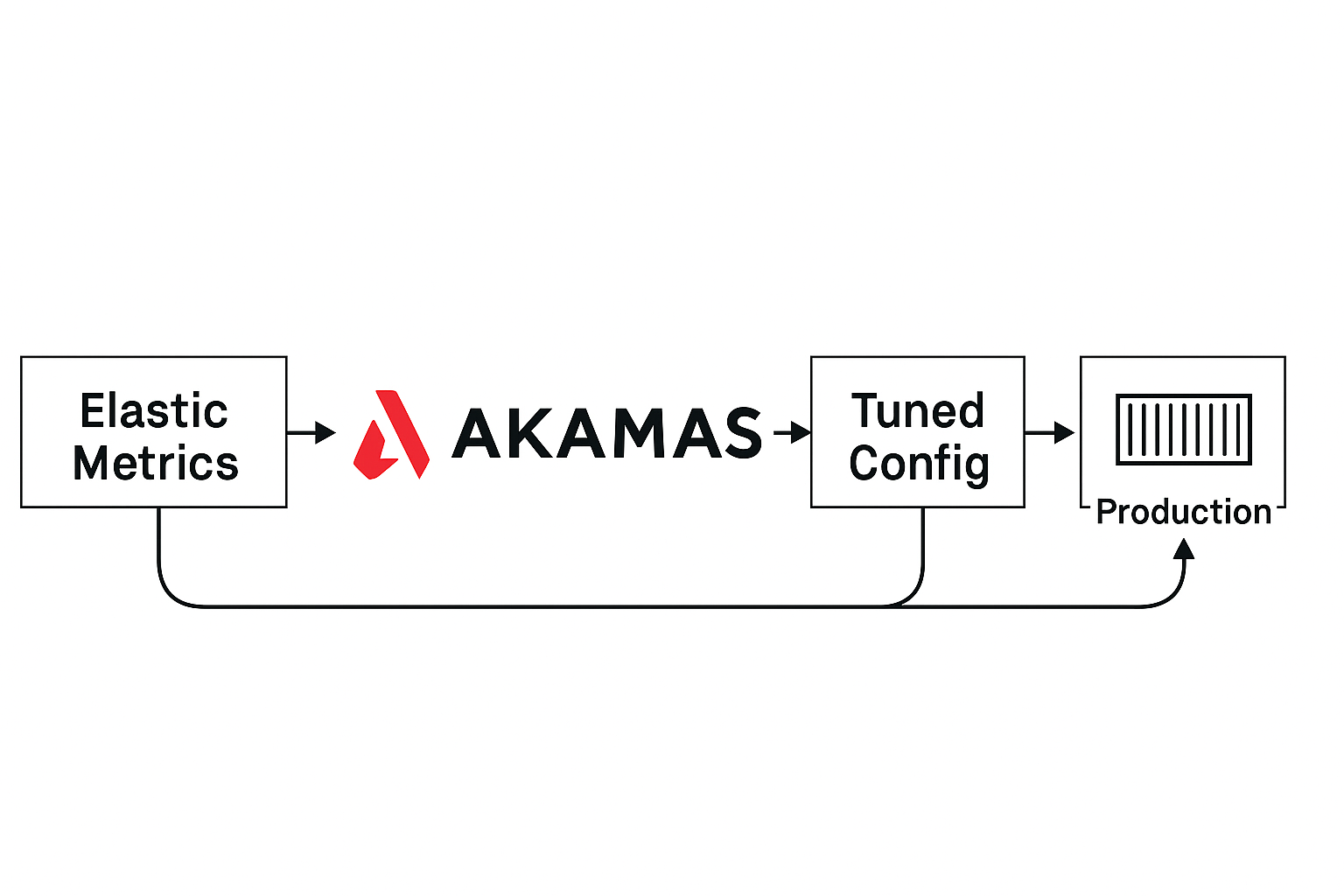

The Observability Feedback Loop — Now With Brains

Here’s what we built:

- Collect: Filebeat + Metricbeat ship structured logs and metrics to Logstash.

- Enrich: Logstash adds metadata: service, role, env, region.

- Correlate: Elastic links logs, metrics, traces into unified events.

- Surface: Dashboards show KPIs tied to business goals (e.g., “Checkout latency p95”).

- Analyse & Tune: Akamas reads KPIs and runs tuning studies.

- Apply: Winning configs are pushed via CI/CD or Rudder.

- Verify: New metrics flow back into Elastic — completing the loop.
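To make the shape of that loop concrete, here is a minimal Python sketch of a single iteration. It is not how Akamas works internally, and it is not our production code. The names `run_loop_once`, `read_kpis`, `propose`, and `is_better` are hypothetical, and the toy functions at the bottom stand in for Elastic (reading KPIs), the optimizer (proposing a config), and the deployment step.

```python
from dataclasses import dataclass
from typing import Callable, Dict

Config = Dict[str, float]
Kpis = Dict[str, float]


@dataclass
class LoopResult:
    applied: bool   # True if the candidate config was kept
    config: Config  # config in effect after this iteration
    kpis: Kpis      # KPIs measured under that config


def run_loop_once(
    current: Config,
    read_kpis: Callable[[Config], Kpis],
    propose: Callable[[Config, Kpis], Config],
    is_better: Callable[[Kpis, Kpis], bool],
) -> LoopResult:
    baseline = read_kpis(current)           # detect: measure the current state
    candidate = propose(current, baseline)  # decide: pick a config to try
    observed = read_kpis(candidate)         # act + verify: measure under the candidate
    if is_better(observed, baseline):
        return LoopResult(True, candidate, observed)
    return LoopResult(False, current, baseline)  # keep the old config


# Toy stand-ins (assumptions for illustration only).
def toy_read_kpis(cfg: Config) -> Kpis:
    # Pretend latency improves linearly with worker count.
    return {"p95_ms": 200.0 - 10.0 * cfg["workers"]}


def toy_propose(cfg: Config, kpis: Kpis) -> Config:
    return {**cfg, "workers": cfg["workers"] + 1}


def toy_is_better(new: Kpis, old: Kpis) -> bool:
    return new["p95_ms"] < old["p95_ms"]


result = run_loop_once({"workers": 4.0}, toy_read_kpis, toy_propose, toy_is_better)
print(result)
```

In a real setup, `read_kpis` would wait for fresh metrics to arrive after a deployment instead of computing them from the config, and `propose` would be the optimizer's job.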

How Akamas Was Actually Integrated and Tested

This wasn’t a “flip the switch in production” move.

Akamas was first deployed on a dedicated optimization node in our staging environment.

We configured it to:

- Pull KPIs directly from Elasticsearch using API queries.

- Focus on specific tuning targets: Elasticsearch heap size, Logstash worker count, Beats batch sizes.

- Run bounded experiments with strict safety constraints.
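As a rough illustration of what "pull KPIs using API queries" can look like, the sketch below builds an Elasticsearch search body that computes a p95 with the `percentiles` aggregation and reads the value back out of the response. The `service` and `env` fields match the metadata added in Logstash; the `latency_ms` field name, the 15-minute window, and both function names are assumptions for the example, not our actual mapping.

```python
from typing import Any, Dict


def p95_latency_query(service: str, env: str, window: str = "now-15m") -> Dict[str, Any]:
    """Build a search body for POST /<index>/_search (field names are assumed)."""
    return {
        "size": 0,  # we only want the aggregation, not the hits
        "query": {
            "bool": {
                "filter": [
                    {"term": {"service": service}},
                    {"term": {"env": env}},
                    {"range": {"@timestamp": {"gte": window}}},
                ]
            }
        },
        "aggs": {
            "latency_p95": {
                "percentiles": {"field": "latency_ms", "percents": [95]}
            }
        },
    }


def extract_p95(response: Dict[str, Any]) -> float:
    """Read the p95 from a keyed percentiles aggregation response."""
    return float(response["aggregations"]["latency_p95"]["values"]["95.0"])


# Example with a hand-written response of the shape Elasticsearch returns.
body = p95_latency_query("checkout", "staging")
sample_response = {"aggregations": {"latency_p95": {"values": {"95.0": 118.5}}}}
print(extract_p95(sample_response))
```

Keeping the query builder separate from the HTTP call makes it easy to test the KPI definition without a cluster.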

The first study ran for 48 hours in staging under production-like load.

Akamas tried multiple parameter combinations, automatically rolling back changes that degraded latency or increased CPU above thresholds.
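The rollback behaviour can be sketched as a simple guarded trial loop. This is an illustration of the idea, not Akamas's algorithm: the `Guardrails` class, `run_bounded_study`, the threshold values, and the toy system model are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Config = Dict[str, float]
Kpis = Dict[str, float]


@dataclass(frozen=True)
class Guardrails:
    max_p95_ms: float
    max_cpu_pct: float

    def violated(self, kpis: Kpis) -> bool:
        return kpis["p95_ms"] > self.max_p95_ms or kpis["cpu_pct"] > self.max_cpu_pct


def run_bounded_study(
    baseline: Config,
    candidates: List[Config],
    apply: Callable[[Config], None],
    measure: Callable[[], Kpis],
    guardrails: Guardrails,
) -> Tuple[Config, List[Config]]:
    apply(baseline)
    best, best_kpis = baseline, measure()
    rolled_back: List[Config] = []
    for candidate in candidates:
        apply(candidate)
        kpis = measure()
        if guardrails.violated(kpis) or kpis["p95_ms"] >= best_kpis["p95_ms"]:
            apply(best)  # roll back to the last known-good config
            rolled_back.append(candidate)
        else:
            best, best_kpis = candidate, kpis
    return best, rolled_back


# Toy system (assumption): more workers lower latency but raise CPU.
state: Config = {"workers": 4}


def toy_apply(cfg: Config) -> None:
    state.update(cfg)


def toy_measure() -> Kpis:
    w = state["workers"]
    return {"p95_ms": 200.0 - 10.0 * w, "cpu_pct": 10.0 * w}


best, rolled_back = run_bounded_study(
    {"workers": 4},
    [{"workers": 6}, {"workers": 8}, {"workers": 5}],
    toy_apply,
    toy_measure,
    Guardrails(max_p95_ms=180.0, max_cpu_pct=70.0),
)
print(best, rolled_back)
```

Here the 8-worker candidate has the lowest latency but breaches the CPU guardrail, so it is rolled back and the 6-worker config wins.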

Once the best configuration was identified:

- Results were validated for another 24 hours.

- The new configuration was committed into our CI/CD pipeline.

- Deployment was done via Rudder, ensuring every node applied the same tuned parameters.

Only after this validation did Akamas become part of the live observability feedback loop.

Why This Matters (And Why Nobody Does It)

Most teams treat observability as passive.

It’s there to tell you what went wrong — not to decide what to do next.

By integrating Akamas, we shifted from passive monitoring to active optimization:

- No waiting for humans to “notice” a trend and manually tweak configs.

- No overprovisioning “just in case”.

- No guessing how changes will impact the system.

Akamas runs targeted experiments with safety constraints.

If an experiment worsens performance, it rolls back automatically.

If it improves performance, we commit the change to production.

A Real Example: Elasticsearch Under Load

We once had a cluster suffering from:

- High query latency during index refreshes.

- Spikes in heap usage causing slow garbage collection.

- Operators manually tuning JVM heap and refresh intervals — with mixed results.

We gave Akamas the following:

- Parameters to tune: es.heap.size, index.refresh_interval, bulk.thread_pool.size

- Objectives:

  - Keep p95 query latency under 120ms.

  - Maintain heap usage below 80%.

  - Reduce CPU by 15%.
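Expressed as data, a study like this boils down to a search space plus a pass/fail check on the objectives. The Python sketch below is only an illustration: the candidate values in `SEARCH_SPACE` are invented, the exhaustive grid is a stand-in for whatever search strategy the optimizer actually uses, and `Objectives` and `meets_objectives` are hypothetical names. Only the parameter names and the three targets come from the study described above.

```python
from dataclasses import dataclass
from itertools import product
from typing import Dict, List

# Parameter names from the study; the candidate values are made up.
SEARCH_SPACE: Dict[str, List[float]] = {
    "es.heap.size": [8, 12, 16],           # GB (assumed unit)
    "index.refresh_interval": [1, 5, 30],  # seconds (assumed unit)
    "bulk.thread_pool.size": [4, 8],
}


@dataclass(frozen=True)
class Objectives:
    max_p95_ms: float = 120.0
    max_heap_pct: float = 80.0
    min_cpu_reduction_pct: float = 15.0


def meets_objectives(
    baseline_cpu_pct: float,
    kpis: Dict[str, float],
    obj: Objectives = Objectives(),
) -> bool:
    # CPU reduction is measured relative to the baseline configuration.
    cpu_reduction = 100.0 * (baseline_cpu_pct - kpis["cpu_pct"]) / baseline_cpu_pct
    return (
        kpis["p95_ms"] < obj.max_p95_ms
        and kpis["heap_pct"] < obj.max_heap_pct
        and cpu_reduction >= obj.min_cpu_reduction_pct
    )


# Enumerate every combination of candidate values.
names = list(SEARCH_SPACE)
candidates = [dict(zip(names, values)) for values in product(*SEARCH_SPACE.values())]
print(len(candidates), candidates[0])
```

A trial that lands at 110ms p95 and 70% heap still fails if CPU only drops from 60% to 55%, since that is a reduction of about 8%, short of the 15% target.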

Akamas tested combinations over real workloads for 36 hours.

The result:

- Latency dropped 27%.

- CPU usage fell 18%.

- Heap stayed under control.

- Changes rolled out via Rudder in under 10 minutes.

The Skeptic’s View

I’ll admit — this sounds futuristic.

It’s not common practice in 2025.

Even experienced engineers will ask:

“Why would you let an optimizer near production configs?”

The answer is: because the alternative is manual tuning forever.

And manual tuning is slow, error-prone, and expensive.

The integration isn’t magic — it’s just connecting two things you already have:

- Telemetry

- An optimization engine

The difference is that nobody thinks to connect them.

Lessons Learned

- Observability without action is a dead end.

- Akamas without real-time data is underutilized.

- Together, they form a closed loop: detect → decide → act → verify.

- The hardest part is cultural — trusting the system to make safe changes.

TL;DR

Observability is no longer about staring at dashboards.

It’s about building a system that sees, decides, and acts — without waiting for you.